Bereaved families urge Government to expand online safety laws

Five bereaved families will on Monday urge ministers to introduce new laws giving parents access to the accounts of children whose deaths are linked to social media use.

The group are backing an amendment to the Online Safety Bill that would force tech giants to unlock the data - or face multi-million pound fines.

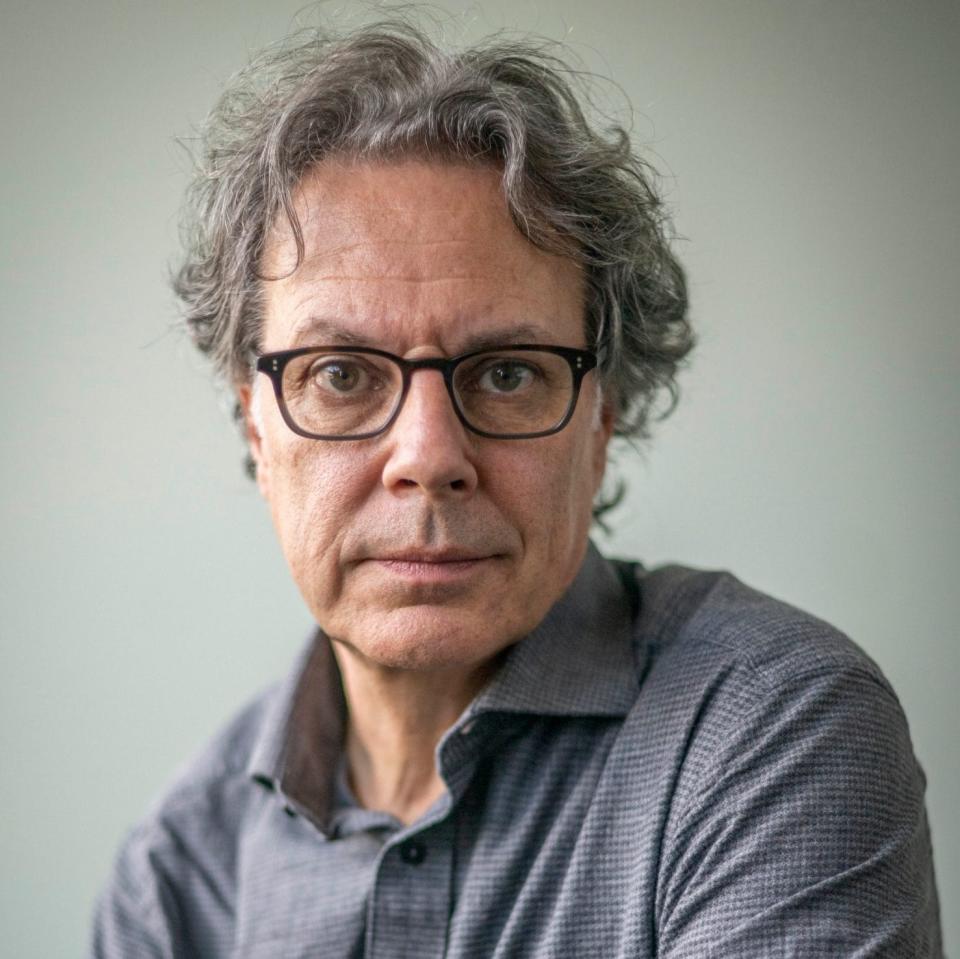

The five - including Ian Russell, whose daughter Molly, 14, took her own life after being bombarded with self-harm and suicide content - will set out their demands in Westminster as the Bill returns to the Commons on Monday after a six-month delay.

Mr Russell, who fought for five years to uncover the 16,000 “destructive” posts of self-harm, anxiety and suicide that Molly received in the six months before her death, said: “We can no longer leave bereaved families and coroners at the mercy of social media companies.

“There is a dire need for managing this process to make it more straightforward, more compassionate and more efficient. The experience of living through Molly’s prolonged inquest is something that no family should have to endure.”

Amendment to ensure social media firms hand over data

It comes after ministers last week unveiled the revised version of the Bill, including moves to force social media firms to bar underage children or face multi-million pound fines.

In an interview with The Telegraph, actress Kate Winslet warns of the impact of social media on teenagers, as she prepares to star in a new drama about the issue alongside her real-life daughter Mia Threapleton.

The amendment to the Online Safety Bill - tabled by online safety campaigner Baroness Kidron - would give watchdog Ofcom powers to ensure that bereaved parents and coroners get access to social media firms’ data if social media was suspected of having played a part in a child’s death.

A new duty would require social media companies to hand over, within a timeframe set by the coroner, all “relevant” content that a dead child had “viewed or otherwise engaged with”.

The bereaved parents claim social media firms “obstructed” many of their attempts to secure data to reveal who their children contacted, how multiple posts were driven to their accounts by algorithms, and what graphic content - including instructions on committing suicide - they viewed.

They are backed by Baroness Morgan, former culture secretary, who said: “Losing a child is every parent’s worst nightmare. But to then have to fight for years against intransigent tech platforms just to find out the type of content which was being accessed can only add to the trauma.

“I am delighted to support Baroness Kidron’s amendments and really hope the Government will work with us to ensure these changes are made to the law.”

The parents have formed a group, Bereaved Parents for Online Safety, which will campaign for greater protections for children on social media.

Baroness Kidron said: “These families suffer agony trying to uncover what their children were looking at in the days and weeks leading up to their deaths, and how much of the material was recommended to them through the algorithms used by tech companies to maximise profits.

“We need to end the tech sector’s tactics of obfuscation and create a transparent, independent process for all to avoid further tragedies of this kind.”

Five families now trying to warn others

Five bereaved families have come together to form a new group to campaign for online safety after social media platforms played a major part in their children’s deaths - by suicide or murder.

Their first demand focuses on the need for new laws to ensure grieving parents - and coroners - get access to their children’s social media accounts, so they can understand how and why they died.

They will present their case in Westminster on Monday, as the Online Safety Bill finally returns to the House of Commons to complete its report and committee stages, which have been delayed from July.

Frankie Thomas

For Judy and Andy Thomas, outstanding questions over the death of their 15-year-old daughter, Frankie, remain locked in her social media accounts. She took her own life at home in September 2018 after a “normal” day at school, with no apparent motive.

“We were devastated and in total shock. Frankie had no previous history of suicide attempts and we were at an absolute loss as to why she had done this,” said Andy, a 61-year-old IT consultant, and Judy, a 64-year-old retired music teacher.

It subsequently emerged that she had spent more than two hours that day out of lessons, unsupervised on a school iPad, accessing material about violent rape and self-harm, as well as fictional stories on Wattpad that featured her favourite band and ended in suicide.

As in the case of Molly Russell, the 14-year-old who took her own life after being bombarded with self-harm and suicide content, the coroner concluded that failures by Frankie’s school and the Wattpad social media platform to protect her from online harms “more than minimally contributed” to her death by suicide.

However, Wattpad, a Canadian company outside the UK’s legal jurisdiction, refused to participate in the inquest as an “interested party” - providing the family with only “very limited information”, said her parents.

The Thomases have also been denied access to Frankie’s Instagram account. Her suicide note contained the name of a stranger and the family have been desperate to find out who that person is and whether they played a role in their daughter’s suicide. They have been writing to Instagram since 2020 to establish whether Frankie met the unknown person on the platform, but have been refused access to her account on privacy grounds.

“We have pointed out both to Instagram and various government ministers that this is a dangerous practice which may protect anyone malicious communicating with a child, since they know the material on the child’s account cannot be disclosed,” said the Thomases.

“If by some means we could see the content, we would just be thankful if nothing of significance was found and that this could be put to rest. It would help us to find some closure.”

Molly Russell

Molly Russell’s father, Ian, admits it would have been easy for the family to give up and accept that little or no digital evidence would be provided to answer their questions about the online experiences that drove their 14-year-old daughter to take her life.

“Instead, with the support of many, we resolved to keep pushing for the data required to learn lessons, improve online safety and save lives,” he said.

It took five years before the full scale emerged, with data revealing Molly received 16,000 “destructive” posts encouraging self-harm, anxiety and even suicide in her final six months.

The coroner concluded she died from an act of self-harm while suffering from depression and “the negative effects of online content” that had “more than minimally contributed” to her death.

At the heart of the inquest were the algorithms that drove the content to Molly - and which would, under the proposed amendments, be included in the material that social media companies would have to divulge to parents and the coroner under powers handed to the online regulator Ofcom.

A new offence of delaying the disclosure of evidence, documents or algorithms to any investigation by Ofcom or the coroner would be created under an amendment to the Coroners and Justice Act. This would mean social media bosses would face up to a year in jail and fines of up to 10 per cent of their companies’ global turnover.

Sophie Parkinson

Ruth Moss, a clinical research nurse, has retained on hard drive some of the suicide and depressive content that she discovered her daughter, Sophie, had viewed before she took her life at the age of 13.

This was only possible because Ms Moss had persuaded Sophie to give her the passwords to her social media accounts after a 31-year-old man attempted to groom her online.

“It was mainly after Sophie’s death that the full extent of her internet use became clear and it was harrowing,” said Ms Moss. “She viewed material on both well-known and lesser known social media sites that showed her how to die quickly and different methods, including how she died.

“I actually morbidly saved it. I still have it on hard drive because I was just so horrified at it. I thought someone needs to look at this and ask: ‘Is this acceptable for children to see?’

“The vast majority of the material she viewed would be considered ‘harmful but legal’ but it’s the quantity that she got, a steady drip feed of content. It might not be deemed fatal, but in the amount that she saw it was very damaging.”

Ms Moss had implemented full parental controls at home but, once out of the house, it was hard to control. “The internet is ubiquitous and it became impossible as a parent to control the internet on my own,” she said.

“I had had discussions with both of my children about safe internet use and limited their use of online devices. But, it was clear that I was losing the battle against the internet providers.

“If something online was removed, other harmful material would replace it. And Sophie was fed more and more of it, through their algorithms.”

Breck Bednar

Lorin LaFave, whose 14-year-old son Breck Bednar was groomed and murdered by a youth he met online, said her family’s grief had been compounded by internet trolls contacting her children on social media pretending to be their dead brother.

It followed similar messages that her daughter, Chloe, received purporting to be from Breck’s killer, 18-year-old Lewis Daynes, who had befriended Breck on online gaming platforms before luring him to his flat and murdering him there.

However, both the social media firm and the messaging app refused to identify who was behind the contacts on the grounds of privacy.

“Can anyone even imagine what it is like to have your brother who has been murdered contacting you via social media and saying some really graphic and horrible things?” she said.

“And yet again police are unable to access them, or it’s too costly or too lengthy and these trolls get away with it. As a parent, we want access to anything that harms our children, especially if it is something that could save them.”

Olly Stephens

The social media data of the three children convicted of Olly Stephens’ killing alone comprised 69,500 pages, taken from 11 different platforms and 40 different devices.

He was just 13 years old when he was lured from home by a 14-year-old girl he knew for what he thought would be a one-to-one chat - only to be ambushed by two boys aged 13 and 14, the younger of whom was armed with a knife. Olly was stabbed twice and died at the scene.

Because it was a criminal murder inquiry, police were able to compile a forensic digital account of the 649 “events” in a timeline that led to Olly’s death.

His parents, Amanda and Stuart, said: “As parents we were completely naive to what was happening on social media, for Olly, until we saw the evidence in court.

“We now raise awareness to ensure that parents know and can try and protect their children as we didn’t. We campaign to ensure that the Online Safety Bill gets passed as quickly as possible and that social media companies are brought to account. The violent language and images go ungoverned and allow arguments online to escalate to murder.”

A spokesperson for Wattpad said: “Wattpad did participate in the inquest, providing a witness statement with comprehensive information about the platform, content guidelines and restrictions, and moderation activities.”

Yahoo Movies